We are a design and innovation company.

We create products and experiences that grow ambitious brands.

We navigate what's next at the intersection of creativity and tech innovation.

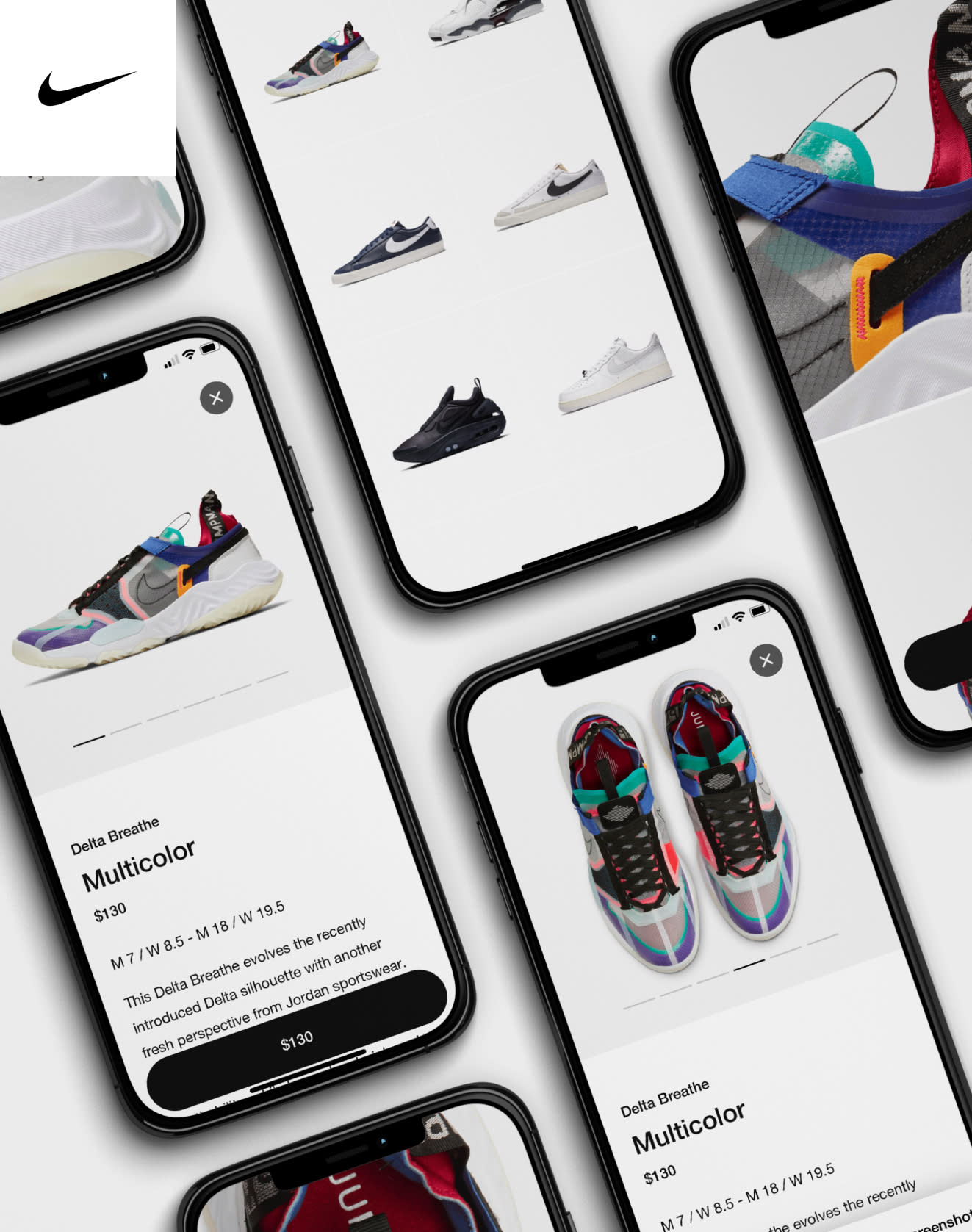

Design experiences that people love.

We create immersive, human-centric experiences that build brand love and fame.

Uncover new sources of growth.

We challenge the status quo to discover previously untapped areas of revenue.

Unlock advantage with emerging technology.

We harness AI and leading-edge technology to create highly personalized connections.

- Google: Partnering with Google to drive their biggest bets, from everyday products to platforms of the future. 100+ engagements serving Google products, brands and teams.

- Verizon: Bringing truth to a world of misinformation through an innovative tool with transparency at its core. 1 billion media impressions.

- McDonald’s: Reinventing McDonald’s ordering experience into a multi-billion-dollar sales channel. 40% of sales attributed to digital alone in Q1 2023.

Harnessing the power of AI to drive growth and engagement.

We've created a suite of proprietary AI and ML tools that use real-time data to maximize ROI.

Makers #1: Cavan Huang & André Souza

While working to improve the digital experience at Planet Fitness, our Group Creative Director and VP of Strategy forged a winning partnership. In the process, they discovered new ways of working and realized the importance of visualizing value for the client.

Find growth for your brand.